To begin with, the technique consists in using SQL Server partition switching which allows to access and transfer subsets of data quickly and efficiently. In this way, we may need to alter the large table and make it partitioned but in this example we are going to create a new one. Now let's say there is a need to keep only data from the latest three months. Here is the structure of the table 'LogTracker' which will be partitioned shortly after. It is also included a specific column named 'MonthNumber' to save the number of the month which at the same time will be the partition number.

CREATE TABLE [dbo].[LogTracker]( [LogID] [uniqueidentifier] NOT NULL, [CreateDate] [datetime] NULL, [ErrorDesc] [nvarchar](MAX) NULL, [User] [nvarchar](40) NULL, [MonthNumber] AS DATEPART(MONTH, [CreateDate]) PERSISTED NOT NULL CONSTRAINT [PK_LogTracker] PRIMARY KEY CLUSTERED ( [LogID] ASC, [MonthNumber] ASC ) )

CREATE TABLE [dbo].[LogTracker_Temp]( [LogID] [uniqueidentifier] NOT NULL, [CreateDate] [datetime] NULL, [ErrorDesc] [nvarchar](MAX) NULL, [User] [nvarchar](40) NULL, [MonthNumber] AS DATEPART(MONTH, [CreateDate]) PERSISTED NOT NULL CONSTRAINT [PK_LogTracker_Temp] PRIMARY KEY CLUSTERED ( [LogID] ASC, [MonthNumber] ASC ) )

CREATE PARTITION FUNCTION [upf_LogTracker](INT) AS RANGE LEFT FOR VALUES (1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11) GO CREATE PARTITION SCHEME [ups_LogTracker] AS PARTITION [upf_LogTracker] ALL TO ([PRIMARY]) GO

ALTER TABLE dbo.LogTracker DROP CONSTRAINT PK_LogTracker WITH (MOVE TO ups_LogTracker([MonthNumber])) GO ALTER TABLE dbo.LogTracker ADD CONSTRAINT PK_LogTracker PRIMARY KEY ([LogID],[MonthNumber]) GO

INSERT INTO LogTracker SELECT NEWID(), '2016-06-23 13:13:56', 'Error A Desc', 'preyes' INSERT INTO LogTracker SELECT NEWID(), '2016-07-24 04:18:12', 'Error C Desc', 'preyes' INSERT INTO LogTracker SELECT NEWID(), '2016-08-20 01:34:57', 'Error B Desc', 'preyes' INSERT INTO LogTracker SELECT NEWID(), '2016-09-21 08:09:16', 'Error D Desc', 'preyes' INSERT INTO LogTracker SELECT NEWID(), '2016-11-22 05:26:45', 'Error A Desc', 'preyes' INSERT INTO LogTracker SELECT NEWID(), '2016-11-23 15:43:56', 'Error A Desc', 'preyes' INSERT INTO LogTracker SELECT NEWID(), '2016-12-24 23:28:12', 'Error C Desc', 'preyes'

SELECT $PARTITION.[upf_LogTracker](MonthNumber),* FROM LogTracker

With this code we are going to delete the historic data and keep only the most recent three months. It moves out the data of each month from 'LogTracker' to the stage table 'LogTracker_Temp' which in no time is truncated. It starts moving data from the month number one until 'the current month - 3'.

DECLARE @M INT = CASE WHEN DATEPART(MONTH, GETDATE()) - 3 >0 THEN DATEPART(MONTH, GETDATE()) - 3 ELSE 12 + DATEPART(MONTH, GETDATE()) - 3 END DECLARE @PartitionNumberToArchive INT=1 WHILE (@PartitionNumberToArchive<=@M) BEGIN ALTER TABLE dbo.LogTracker SWITCH PARTITION @PartitionNumberToArchive TO dbo.LogTracker_temp TRUNCATE TABLE dbo.LogTracker_temp UPDATE STATISTICS dbo.LogTracker WITH FULLSCAN SET @PartitionNumberToArchive=@PartitionNumberToArchive+1 END

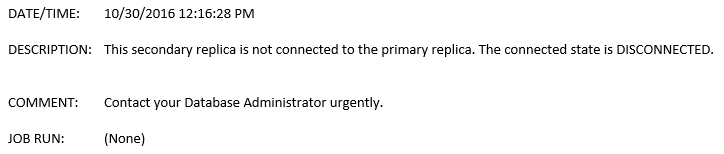

Naturally, the code needs to be scheduled and executed via a SQL Job at the end of each month. To be perfectly honest, not only will this deletion finish in one or two seconds, but also it will not cause any impact on the performance and the Transaction Log will not grow as it does by using traditionally techniques. I hope this post is truly useful for you and you make the most out of it. Let me know any remarks you may have. That is all for now. Thanks for reading.